Introduction

Warning

We assume no responsibility for any illegal use of the codebase. Please refer to the local laws regarding DMCA (Digital Millennium Copyright Act) and other relevant laws in your area.

This codebase is released under the BSD-3-Clause license, and all models are released under the CC-BY-NC-SA-4.0 license.

Requirements

- GPU Memory: 4GB (for inference), 8GB (for fine-tuning)

- System: Linux, Windows

Windows Setup

Windows professional users may consider WSL2 or Docker to run the codebase.

Non-professional Windows users can consider the following methods to run the codebase without a Linux environment (with model compilation capabilities aka torch.compile):

- Unzip the project package.

- Click

install_env.batto install the environment.- You can decide whether to use a mirror site for downloads by editing the

USE_MIRRORitem ininstall_env.bat. USE_MIRROR=falsedownloads the latest stable version oftorchfrom the original site.USE_MIRROR=truedownloads the latest version oftorchfrom a mirror site. The default istrue.- You can decide whether to enable the compiled environment download by editing the

INSTALL_TYPEitem ininstall_env.bat. INSTALL_TYPE=previewdownloads the preview version with the compiled environment.INSTALL_TYPE=stabledownloads the stable version without the compiled environment.

- You can decide whether to use a mirror site for downloads by editing the

- If step 2 has

USE_MIRROR=preview, execute this step (optional, for activating the compiled model environment):- Download the LLVM compiler using the following links:

- LLVM-17.0.6 (original site download)

- LLVM-17.0.6 (mirror site download)

- After downloading

LLVM-17.0.6-win64.exe, double-click to install it, choose an appropriate installation location, and most importantly, checkAdd Path to Current Userto add to the environment variables. - Confirm the installation is complete.

- Download and install the Microsoft Visual C++ Redistributable package to resolve potential .dll missing issues.

- Download and install Visual Studio Community Edition to obtain MSVC++ build tools, resolving LLVM header file dependencies.

- Visual Studio Download

- After installing Visual Studio Installer, download Visual Studio Community 2022.

- Click the

Modifybutton as shown below, find theDesktop development with C++option, and check it for download.

- Install CUDA Toolkit 12

- Download the LLVM compiler using the following links:

- Double-click

start.batto enter the Fish-Speech training inference configuration WebUI page.- (Optional) Want to go directly to the inference page? Edit the

API_FLAGS.txtin the project root directory and modify the first three lines as follows:--infer # --api # --listen ... ... - (Optional) Want to start the API server? Edit the

API_FLAGS.txtin the project root directory and modify the first three lines as follows:# --infer --api --listen ... ...

- (Optional) Want to go directly to the inference page? Edit the

- (Optional) Double-click

run_cmd.batto enter the conda/python command line environment of this project.

Linux Setup

# Create a python 3.10 virtual environment, you can also use virtualenv

conda create -n fish-speech python=3.10

conda activate fish-speech

# Install pytorch

pip3 install torch torchvision torchaudio

# Install fish-speech

pip3 install -e .

# (Ubuntu / Debian User) Install sox

apt install libsox-dev

Changelog

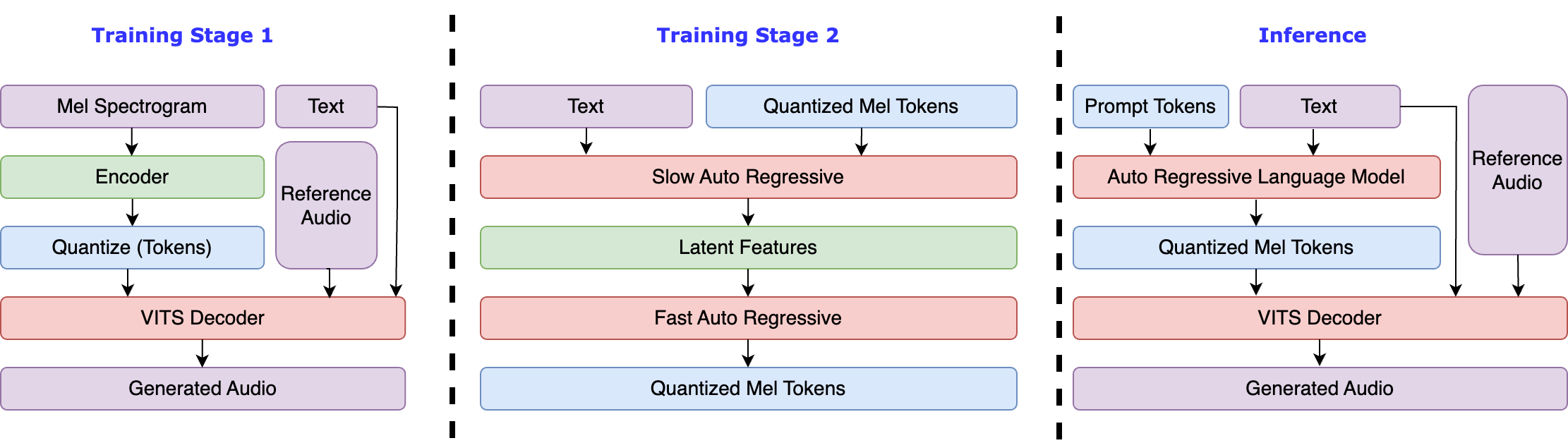

- 2024/07/02: Updated Fish-Speech to 1.2 version, remove VITS Decoder, and greatly enhanced zero-shot ability.

- 2024/05/10: Updated Fish-Speech to 1.1 version, implement VITS decoder to reduce WER and improve timbre similarity.

- 2024/04/22: Finished Fish-Speech 1.0 version, significantly modified VQGAN and LLAMA models.

- 2023/12/28: Added

lorafine-tuning support. - 2023/12/27: Add

gradient checkpointing,causual sampling, andflash-attnsupport. - 2023/12/19: Updated webui and HTTP API.

- 2023/12/18: Updated fine-tuning documentation and related examples.

- 2023/12/17: Updated

text2semanticmodel, supporting phoneme-free mode. - 2023/12/13: Beta version released, includes VQGAN model and a language model based on LLAMA (phoneme support only).